AT&T Futurist Report – A Faster, Smarter Future in 5G

INTRODUCTION

The difference between bits and atoms is blurring. Over the next decade, the network will become an overlay on top of our physical world. The virtual and real will blend in deeply immersive experiences that bring us closer together, no matter where we may be. The world will explain itself through augmented reality. As we imbue everyday objects with computer intelligence and communication capabilities, the Internet of Things will spring to life with myriad devices communicating with us, and each other. Pervasive sensor networks will open new vistas on our planet. Every object, every interaction, and every observation will become a piece of data to inform advanced simulations. Meanwhile, we’ll soon be sharing our offices, hospitals, schools, roads, and homes with a new breed of companion: intelligent robots. Over the next decade, we’ll see these intelligent machines in a different light and reconsider how we see ourselves.

These transformations will be driven by the proliferation of 5G networks and edge computing. The 5G networks on the horizon will be dramatically faster than today’s wireless infrastructure, but they’ll also break the barriers of network latency, the period between when your device requests data from the cloud and the network sends that data. As a result, the massive amount of near real-time data crunching necessary for your smartphone to render a convincing virtual world or for a robot to learn how to clean your house can be moved to the network. The model, called edge computing, essentially makes it possible for any connected device to have the power of a supercomputer.

“Edge computing fulfills the promise of the cloud to transcend the physical constraints of our mobile devices,” says Andre Fuetsch, president of AT&T Labs and AT&T’s chief technology officer. “The capabilities of tomorrow’s 5G are the missing link that will make edge computing possible.”

With that in mind, we present the following forecast of applications at the intersection of 5G and edge computing. (A prior AT&T Futurist Report titled “Blended Reality” specifically explored the future of entertainment.)

The emerging technologies of 5G and edge computing promise an unprecedented opportunity to augment and elevate the human experience. The rest is up to us.

About This Report

To create this latest installment in AT&T’s Futurist Report series, “A Faster, Smarter Future: Emerging Applications for 5G and Edge Computing,” AT&T collaborated with Institute for the Future (IFTF), which drew from its ongoing deep research in mobile computing, wireless networking, and artificial intelligence, along with adjacent and intersecting areas, from human-computer collaboration to “the Internet of Actions.” IFTF then conducted interviews with experts in academia and industry for their divergent viewpoints. Informed by that research, IFTF synthesized five big stories likely to play out over the next decade at the intersection of 5G networks and edge computing. Each of these big stories is supported by six “Signals,” present-day examples that indicate directions of change. Think of a Signal as a signpost pointing toward the future.

AT&T and IFTF hope that the foresight contained in this Futurist Report provokes insight that drives strategic action in the present.

Predictions

Prediction: Learning Flows

Education will take place in continuous and context-aware mobile learning channels blending digital and physical experiences.

“The future of education technology is taking learning out of the pages of books and making it part of your world.” —Maya Georgieva, Director of Digital Learning, The New School and Cofounder, Digital Bodies

High-speed mobile networks and artificial intelligence at the network’s edge will draw education out of episodic experiences in classrooms and into learning flows coursing through our daily lives. We will engage in continuous learning channels that are always accessible through location-based augmented reality (AR) and virtual reality (VR) experiences that are relevant to where we are at the moment. Personal AIs will guide our “continual education,” understanding our interests and needs to proactively reveal teachable moments that inspire, inform, and educate. Meanwhile, ultra-high-definition video streaming intersecting with low-latency, roaming telepresence robotics will dramatically expand the concept of “distant learning” as students remotely explore historical or scientific sites.

“With 5G, you can use AR and VR to learn at work, at home, from your commute, or anywhere else,” says Maya Georgieva, Director of Digital Learning at The New School and co-founder of Digital Bodies. “And machine learning and AI can contextualize and personalize the information.”

Imagine: Kindergartners at recess will develop a visceral understanding of physics through scientific explanations digitally superimposed on playground equipment. High schoolers in Tel Aviv will cap off an earth sciences unit by collaboratively controlling a telepresence robot inside an active volcano in Hawaii. A team of biology graduate students dispersed around the world will simultaneously accompany scientists into the rain forests of Brazil via telepresence and then meet in a virtual classroom to discuss their findings.

From remote apprenticeships and collaborative networks to content commons and algorithmic education, the networked learning economy will be integrated into the way we play and work. And perhaps most importantly, these learning experiences will be social, collaborative, and immersive to drive deep engagement.

“Every day can be a field trip,” Georgieva says.

Signal: Augmented Classroom

What: Blippar’s Blippbuilder and Blippbuilder Script are easy drag-and-drop authoring tools for augmented reality that enable publication of the experiences to multiple mobile apps.

Signal: Museum of Other Realities

What: Described as “a new space for a new kind of culture,” the Museum of Other Realities is a fully immersive virtual exhibition hall for artists to share their experimental digital creations with the public and each other.

Signal: Reading, Writing, and Robots

What: Vecna Technologies’ VGo is a remotely controllable mobile telerobot that “enables a person to replicate themself in a distant location and have the freedom to move around as if they were physically there.”

Signal: New Lens on History

What: Launched by BBC Arts to complement a new TV series about human creativity, Civilisations AR is a mobile augmented reality app that delivers, for example, an X-ray view into an ancient Egyptian mummy from the Torquay Museum or a digital “eraser” to see how an artwork has faded over the centuries.

Signal: Distance Learning Gets Social and Virtual

What: Immersive VR Education develops tools for educators “to create their own content in virtual classrooms or virtual training environments.”

Signal: Computer As Tutor

What: The IBM Watson Tutor uses natural language processing and machine learning in the cloud to provide students with personalized educational support. It’s based on the company’s cloud-based AI service that famously beat two champions of the Jeopardy! TV quiz show.

Prediction: Distributed Science and Networked Discovery

We will blanket the planet with devices that can see, hear, and sense once-inaccessible areas and hidden phenomena.

“If 5G and edge computing is going to handle half of the processing and power management on a sensor, I don’t have to worry about it anymore. I can have a smaller device that uses less power, that perhaps does more and becomes more useful, and now it’s cheaper, so I can deploy more of them.”—Sean Bonner, Director and Cofounder, Safecast

Scientists and conservationists will soon gain access to unprecedented rivers of environmental data flowing through 5G networks. Miniaturized, inexpensive, wirelessly connected flocks of mobile scientific instrumentation with integrated sensor systems will measure and monitor rain forest health, air and water quality, climatic trends, atmospheric and ocean temperatures, greenhouse gases, radioactivity, archaeological digs, meteor showers, animal migration, and more.

5G’s low latency and massive real-time data-transfer capability, combined with AI and edge computing, will open new doors for telerobotic fieldwork, remote sensing for agriculture and conservation efforts, animal-migration patterns, climatic changes, ocean-current flow rates, and satellite monitoring to improve environmental, health, and disaster forecasting. Professional scientists will need as much public involvement as possible to find patterns and draw out insights from the massive amounts of raw data after first-cut machine-learning analysis at the network’s edge.

For example, Safecast is a non-profit organization in Japan that has deployed hundreds of mobile radiation sensors that volunteers attach to their cars and drones to take measurements all over the world. Safecast has recorded over 100 million radiation readings, and the database is used by scientists all over the world. Each sensor costs about $750, and much of that cost is for the onboard processing and power-management subsystems.

According to Safecast cofounder Sean Bonner, offloading the processing to an edge computing network environment and sending data over 5G could reduce the cost and power consumption of the Safecast devices. 5G will also make it easier and faster to broadcast firmware patches to hundreds or thousands of sensors in the field at once.

Today’s scientists are distributed and networked; tomorrow, their tools will be as well.

Signal: Roving Wireless Sensors

What: After the meltdown of the nuclear reactor in Fukushima, Japan, two hackerspaces in Tokyo and Los Angeles formed a partnership called Safecast to design, manufacture, and distribute mobile radiation monitors.

Signal: Science Data Gets a Backbone

What: The Pacific Research Platform (PRP) is a high-speed network designed especially for data-intensive scientific research. Larry Smarr, principal investigator of the PRP and director of the California Institute for Telecommunications and Information Technology, says that the 5G network’s optical backbone will be an important part of PRP.

Signal: Sea Sensors

What: Researchers from the Overturning in the Subpolar North Atlantic Program (OSNAP) deployed an array of undersea sensor probes across a large area of the Atlantic Ocean to better understand the currents in the North Atlantic.

Signal: Balloon-Borne Infrasound Sensors

What: Scientists at Sandia National Laboratories developed solar-powered hot air balloons with GPS trackers and infrasound sensors to detect sources of low-frequency sounds, such as explosions and seismic activity, and triangulate on their source. Flying at three times the altitude of a commercial jet, the balloons could potentially be used on other planets, like Venus and Jupiter.

Signal: Drone-Based Migration Monitor

What: Researchers went to Western Canada and used drones and machine-vision software to better understand migration behavior of caribou by tracking individual animals as they moved.

Signal: Pompeii Exploration Gets an Upgrade

What: The ancient Roman city of Pompeii, which was buried by ash when Mount Vesuvius erupted in 79 A.D., is so remarkably well-preserved that archaeologists have only begun to discover everything it holds. They are now studying one especially artifact-rich area, known as Regio V, using drones, georadar, lasers, and VR.

Prediction: Digital Twins

Advanced simulations connected to the physical world will drive innovation.

“As we move forward in the future, we can start to do much more predictive things with digital twins, and have engineering innovations by virtue of the information exchange that goes back and forth between the operational system and the digital-twin model.”—Donna Rhodes, Principal Research Scientist at MIT

Engineers, scientists, and designers have long used digital models in their work, but until recently, these models were isolated from the real world. The next decade will see the proliferation of digital twins, real-time digital models of our homes, cities, factories, and other environs that could enable predictive crystal-ball-like simulations.

“The digital twin is really the digital replica of the operational system,” says Donna Rhodes, a principal research scientist at MIT who runs a research group focused on advancing the design of large-scale, complex, sociotechnical systems.

What makes digital twins so much more useful than isolated models is that they are continuously being refreshed with sensor signals fed to them from the real world. Rhodes offers the example of a wind-turbine farm, which uses GE’s digital-twin technology. Each wind turbine is equipped with sensors, and the data is sent in real time to a computer simulation of the wind farm.

“The model can then be used in decisions,” says Rhodes, “like predicting when a part could be breaking down.”

In some cases, a physical twin must transmit an enormous amount of data to its digital twin. That’s where 5G’s blisteringly fast speed and low latency can make a big difference in boosting the verisimilitude of digital twins. In the coming years, digital twins will move from engineering labs and factory floors to the world at large. Ubiquitous high-end video cameras that leverage 5G and edge computing for image processing, combined with GPS and other kinds of sensors, will be installed in vehicles (both autonomous and human operated), mobile devices, machinery, and other things, all of which will collect data to create volumetric maps of the world. These photorealistic maps will be the foundation for a wide variety of telepresence, VR, and AR applications.

Signal: Ultrahigh-Resolution 3-D Models

What: Zeiss’s photorealistic 3-D scanner, called RealScan, creates extremely detailed digital copies of physical objects that can be viewed in near-microscopic detail.

Signal: 3-D Desktop Display

What: The Looking Glass is a non-headgear 3-D display that generates full-color superstereoscopic views of digital models and scenes, and is capable of displaying video at 60 frames per second.

Signal: Digital Twins That Learn

What: SWIM EDX is a software platform that automatically generates digital twins that observe, learn, and adapt to streaming data from complex real-time systems. The company recently used EDX to coordinate the behavior of an autonomous drone swarm.

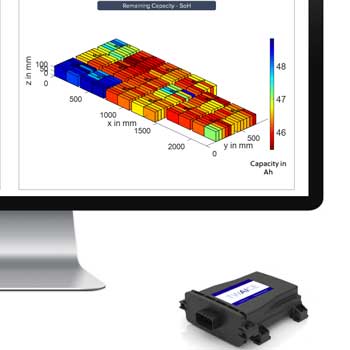

Signal: Smarter Smart Batteries

What: Twaice provides digital-twin solutions to optimize battery design, testing, and tuning for companies with fleets of electric vehicles, as well as for EV automakers. The virtual batteries can help these manufacturers detect problems before they begin to appear in the physical batteries.

Signal: Viewing Factories in VR

What: visualiz makes VR viewable models of entire businesses and factories, which are updated with real-time data from the physical assets and processes in the organization. Multiple remote users can interact with a model at the same time.

Prediction: Social Networks for Robots

Tomorrow’s robots will teach each other how to navigate our world.

“Robots will learn from their mistakes and share what they learn collectively so all of the robots improve over time.”—Ken Goldberg, UC Berkeley professor of robotics, automation, and new media

Over the next decade, new species of robots will roll, toddle, and stride into all aspects of our lives, changing the nature of how we work, live, and even interact with one another. Edge computing and 5G networks will be the nervous system for robots that are no longer dependent on limited onboard computation or memory. Autonomous vehicles, drone swarms, smart manufacturing systems, and home robots will depend on high-speed, low-latency connections to the network for maps, statistical analysis, and motion planning.

Rather than count on task-specific algorithms, tomorrow’s robots will be deep learners, harnessing edge computing to process massive amounts of data in order to get smarter as they go about their business building widgets in highly automated factories, tending fields to optimize crop production, or cleaning our homes safely and efficiently. Most importantly, these robots will participate in social networks of their own to distribute their best practices far and wide.

“No matter how much you program a robot, there will always be corner cases, things an autonomous vehicle doesn’t recognize, or objects a machine doesn’t know how to pick up,” says UC Berkeley professor Ken Goldberg. “If it can’t do something, a robot should use a combination of local and cloud processing to analyze the data and figure out what went wrong. Then, once it has learned something, it can update other robots over the network.”

This will be especially important as robots move outside the structured territories of factories, laboratories, and office parks and into the unpredictable environments where most humans live. Of course, the ability of robots to share data about our daily lives—photos or audio recordings, for example—means that extra precautions will need to be taken to ensure privacy and security. After all, just because we invite robots into our homes doesn’t mean we want them gossiping about us with their online friends.

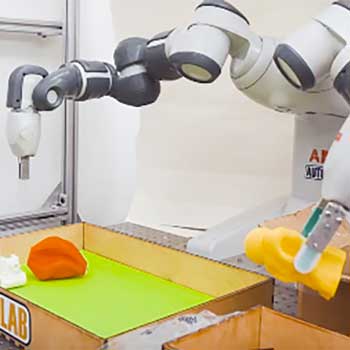

Signal: Helping Hand for Robot Grippers

What: Developed by UC Berkeley researcher Ken Goldberg and colleagues, Dex-Net as a Service (DNaaS) is a system that uses cloud computing at a central server to calculate robust grasps for object shapes that are uploaded.

Signal: Robot Delivery

What: Starship Technologies’ small robots deliver food and parcels on corporate and academic campuses. Currently, the robots travel autonomously, although a remote operator can intervene as necessary.

Signal: A Car’s-Eye View

What: The VTT Technical Research Centre of Finland and its partners prototyped the 5G-Safe system for autonomous vehicles to share 3-D views of road conditions and other sensor data with other cars, road operators, and safety services.

Signal: Automating Agriculture

What: Soft Robotics, Inc. is developing new applications of its robotic grippers and control systems to automate the harvesting of fruits and vegetables.

Signal: Cloud Robotics in the Sky

What: Rapyuta Robotics’ io.drone is an enterprise drone system for applications from security and agriculture to construction and emergency response.

Prediction: Augmented Cognition

Tomorrow’s experts will be human-computer hybrids.

“Augmenting high cognitive-load tasks with artificial intelligence brings out the best in human operators. From our work at Synapse Technology, we’ve seen our operator-assist platform significantly increase human job satisfaction, decrease time per task, and increase accuracy rates.”—Ian Cinnamon, cofounder and President, Synapse Technology Corporation

The best chess player in the world is not a human being. It’s not a computer, either. The best chess player in the world is a centaur. This term is used by chess players to describe a human who partners with a computer program to compete in anything-goes freestyle chess tournaments. Centaurs exist outside of chess, too. The best brain-tumor diagnostician is a centaur: a doctor working with an AI software system.

AI-human hybrid intelligence is superior to a lone human or robot because it has an unbeatable combination of a human being’s broad knowledge, flexibility, ethical compass, and ancient evolved instincts along with the blindingly fast speed and relentlessness of machine logic. The advent of 5G networks will deepen the human-machine relationship, giving rise to millions of human/AI centaurs who exceed the capabilities of individual humans and AI programs. We’ll have centaur weather forecasters, centaur soldiers, centaur students, centaur fashion designers, centaur artists, and more. Instead of fearing a robot revolution, we can look forward to working alongside intelligent machines designed to help us successfully achieve our goals.

“In most business situations, our experience has shown that approximately one-third of decisions are optimal, one-third are less than optimal because of limited information, and another third end up being wrong,” writes Jesus Mantas, general manager of cognitive-process transformation at IBM Global Business Services. “Cognitive augmentation of processes therefore leads to significant efficiency because of better decision-making, which typically leads to higher job satisfaction and lower attrition rate in the roles augmented by AI.”

In a way, we are already augmented cyborgs—anyone who uses a smartphone to look up information is one. Over the next decade, 5G and edge computing will underpin the formation of new human-machine partnerships that will help us make better decisions, be more creative, learn more efficiently, and achieve goals more quickly.

Signal: Scanning Packages for Threats

What: Every single day, billions of packages and pieces of luggage must be scanned by human screeners for weapons, explosives, and other dangerous materials. Synapse Technology has created an augmented cognition system that uses deep learning and machine vision to analyze the contents of packages for threats and communicates with human scanners through a friendly user interface.

Signal: Virtual Surgery for Real Patients

What: At the Royal London Hospital, physician Shafi Ahmed performed cancer surgery while consulting with two other remote surgeons in augmented reality using Aetho’s Thrive telepresence platform.

Signal: Unmanned Aerial Teams

What: Defense forces have long used unmanned aerial vehicles, but recently the US Army has been deploying teams of manned and unmanned aerial vehicles to increase the likelihood of a successful mission.

Signal: Designs That Evolve

What: Autodesk is incorporating generative design into its CAD products. A human designer specifies constraints for a part (such as weight, size, and strength), and the software mimics the processes of biological evolution to generate designs that meet the specifications.

Signal: Cyborgs on the Battlefield

What: The US military has entered into “a new era of human-machine collaboration and combat teaming,” said Deputy Secretary of Defense Bob Work in a statement about AI tools that will process large streams of incoming data and suggest courses of action to military personnel.


ABOUT IFTF

Institute for the Future (IFTF) is celebrating its 50th anniversary as the world’s leading non-profit strategic futures organization. The core of our work is identifying emerging discontinuities that will transform global society and the global marketplace. We provide organizations with insights into business strategy, design process, innovation, and social dilemmas. Our research spans a broad territory of deeply transformative trends, from health and health care to technology, the workplace, and human identity. IFTF is based in Palo Alto, California. For more, visit www.iftf.org.

ABOUT AT&T COMMUNICATIONS

We help family, friends and neighbors connect in meaningful ways every day. From the first phone call 140+ years ago to mobile video streaming, we innovate to improve lives. We have the nation’s largest and most reliable network and the nation’s best network for video streaming.** We’re building FirstNet just for first responders and creating next-generation mobile 5G. With DIRECTV and DIRECTV NOW, we deliver entertainment people love to talk about. Our smart, highly secure solutions serve over 3 million global businesses—nearly all of the Fortune 1000. And worldwide, our spirit of service drives employees to give back to their communities.

AT&T Communications is part of AT&T Inc. (NYSE:T).

Learn more at att.com/CommunicationsNews.

**Coverage not available everywhere. Based on overall coverage in U.S. licensed/roaming areas. Reliability based on voice and data performance from independent 3rd party data.

AT&T, the Globe logo and other marks are trademarks and service marks of AT&T Intellectual Property and/or AT&T affiliated companies. All other marks contained herein are the property of their respective owners.

© 2018 AT&T Communications, Institute for the Future. SR-2028B | CC BY-NC-ND 4.0